AI-driven algorithms on social media platforms and websites often recommend content that promotes unproven treatments, conspiracy theories, and false information.

As artificial intelligence (AI) continues to permeate our lives, a growing concern has emerged – the spread of misinformation, particularly in healthcare. Misinformation, fueled by AI, is a pressing issue that threatens the credibility of health information available online.

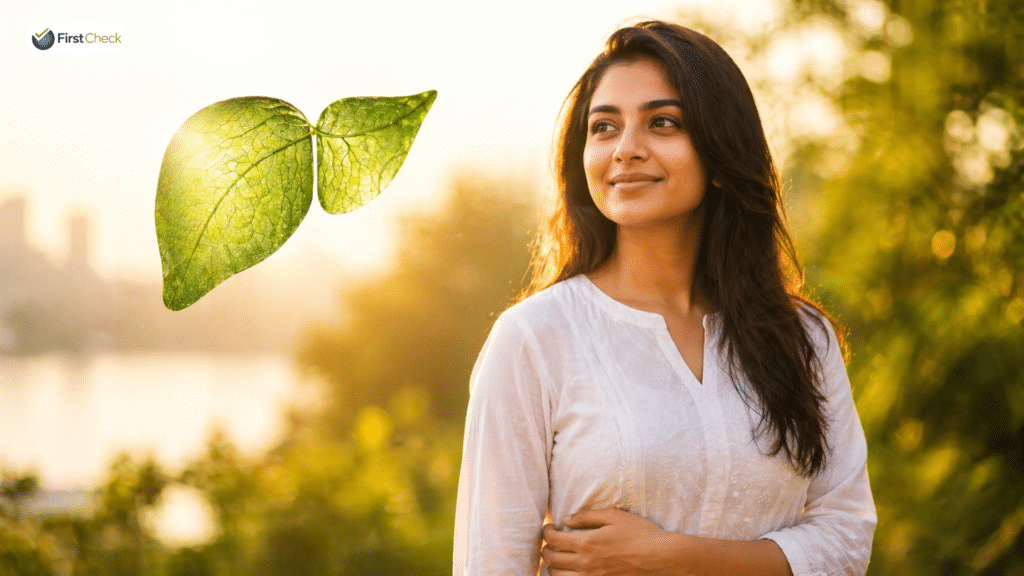

A widely circulated Facebook video claiming that eating chia seeds soaked in water every day can cure diabetes and reduce abdominal fat is a recent case in point. To begin with, the presenter explaining the many benefits of chia seeds is an AI bot. Unfortunately, most viewers don’t notice that; they mistake the human face and voice for an actual medical expert.

While AI holds the potential to revolutionise healthcare, it can also disseminate false or misleading information with detrimental consequences for public health. In the case of the viral Facebook video, the results could be disastrous if people with diabetes stop medical treatment to pursue the “chia seeds remedy”, as there is no scientific evidence to support the claim.

As far as claims about weight loss are concerned, it’s important to note that hydrated chia seeds absorb water within the stomach, resulting in a feeling of fullness and reduced hunger for a certain period. However, that doesn’t automatically lead to weight loss. It’s best to consult your doctor before making any drastic dietary changes.

A recent thought-provoking study looked at how generative AI is enabling users to generate harmful eating disorder content. Another concern is that an AI-powered chatbot can misinterpret symptoms and suggest a less severe condition when a user may actually be experiencing a medical emergency. Such incidents can delay timely medical intervention, causing harm to individuals.

During the COVID-19 pandemic, AI-driven algorithms on social media platforms and websites were known to recommend content that promoted unproven treatments, conspiracy theories, and false information about the virus. How do we uphold trust in the face of AI-generated medical recommendations?

AI in health is a double-edged sword. While we tap into its immense potential to enhance healthcare, it’s important to address the perils of the misinformation it can spread. The speed at which AI can amplify false, unscientific health information calls for a proactive approach involving tech firms, regulators, and individuals. Collaborative efforts are the need of the hour to harness AI’s many benefits while minimising potential harm to public health.